When supporting some of the world’s largest and most successful SaaS companies, we at Wavefront by VMware get to learn from our customers regularly. We see how they structure their operations, how they implement their monitoring and automation policies, and how they use smarter alerts to lower mean time to identify and mean time to automate.

In this four-part blog series, I will:

- Share some of the things we’ve learned in how these companies use analytics to find hidden problems with cloud apps faster and more accurately

- Review a set of examples where customers have automated analytics for more actionable, ‘smarter’ alerting

Simple vs. Smart Alerting

First, what characterizes alerts that aren’t so smart? Well, they have a very simple way of looking at the world, with thresholds that divide the good from the bad. Simple alerts tend to work on univariate (one variable) data. They look at one data series at a time and tend to have “all or nothing” severity: either something is firing, or it’s not.

While simple alerts can be helpful in some use cases, they tend to create a lot of noise, with many false positives and many false negatives. They also can’t use relationships between different data sets. For example, you can’t alert on the ratio of one metric to another. And because the alerts aren’t targeted, too many people, both SREs and developers, end up getting involved in every issue. As a result, the poor fit between the problems of modern cloud architecture and what simple alerting can solve becomes very expensive for companies.

Smart alerts fix these problems. They use a more expressive language for defining and finding anomalies. An expressive language allows you to express actual relations between different data sets and not just individual behavior. Finally, you have a way to do targeted escalation – to get the right alert to the right team at the right time.

Figure 1. Simple vs. Smart Alerts
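To make the contrast concrete, here is a minimal Python sketch. It is not Wavefront's query language; the metric names (`errors`, `requests`), the thresholds, and the severity labels are all assumptions chosen for illustration. The simple alert looks at one series with a fixed threshold, while the smarter one uses the ratio of two series and returns a graded severity.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    errors: float    # errors per minute (hypothetical metric)
    requests: float  # requests per minute (hypothetical metric)


def simple_alert(sample: Sample, error_threshold: float = 100.0) -> bool:
    """Univariate and all-or-nothing: fires when the error count passes a fixed threshold."""
    return sample.errors > error_threshold


def smart_alert(sample: Sample, warn_ratio: float = 0.01, severe_ratio: float = 0.05) -> str:
    """Uses the relationship between two series (error ratio) and grades the severity."""
    if sample.requests == 0:
        return "ok"  # no traffic, so there is no ratio to evaluate
    ratio = sample.errors / sample.requests
    if ratio >= severe_ratio:
        return "severe"
    if ratio >= warn_ratio:
        return "warn"
    return "ok"


# Illustrative data points, not taken from any real system.
busy_but_healthy = Sample(errors=150, requests=100_000)  # 0.15% of requests fail
quiet_but_broken = Sample(errors=40, requests=400)       # 10% of requests fail

print(simple_alert(busy_but_healthy), smart_alert(busy_but_healthy))
print(simple_alert(quiet_but_broken), smart_alert(quiet_but_broken))
```

With these assumed thresholds, the simple alert fires on the healthy busy service (a false positive) and stays silent on the broken quiet one (a false negative), while the ratio-based alert classifies both as you would expect. The severity label is also what a routing step could use to decide which team gets notified.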

Severity Hierarchies Shape the DevOps Movement

One of the more interesting operational design patterns we see is that people don’t scale up their operations teams anymore. Instead, they scale out their development teams. All of our larger customers do this, as there’s just too much complexity for even a large team of 30+ operations people to handle the whole system. When they do this, the developers end up writing alerts using ‘self-serve analytics’. Why? Developers have intimate knowledge of their code. They instrument their code, deploy patches themselves without interference from operations, and watch that code running live. To keep an eye on how things are working, they also create informational notifications, which are not even alerts per se, to get a true sense of how their code behaves in production. That is, for their one piece of the overall system.

Now, there is way too much telemetry information for the operations team to grok. The operations team doesn’t have the luxury of working on much more than notifications classified as severe, or important events happening right now. They work on finding and fixing truly actionable things. Meanwhile, the more developers understand how their code runs in production, the more issues the company can proactively avoid before they become critical operational problems.

So, we see a spectrum: developers create more informational alerts and watch their code in production, almost as if they were using a remote profiler or debugger, while operations watches the problems happening right now. But to do this, you need a couple of things. First, you need a deep set of telemetry data across your entire stack. Then, you need a very powerful language to express anomalies that go far beyond simple thresholds. And this brings us to metrics-based anomalies.

What is an Anomaly and Why Do We Care?

What is an anomaly? If you look in the dictionary, anomalies are defined as:

Figure 2. Anomaly Definition

An anomaly is something that’s not expected – something unusual – not necessarily good or bad, but just different. Now, why do we care about anomalies? Anomalies tell us when to act. We act and touch production systems for two reasons: first, to change or upgrade functionality in a planned manner. The second is to fix something in an unplanned manner. Anomalies help us with those unplanned changes.

Why Metric-based Anomaly Detection vs. Logs-based?

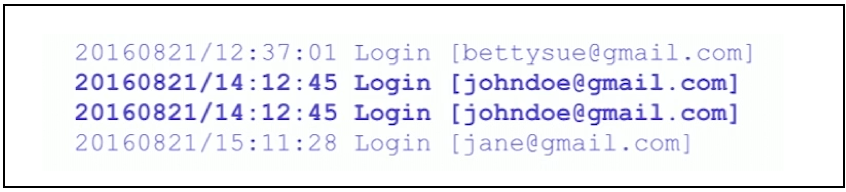

Now, why metrics as opposed to events? Events are what you see in logs: records that something happened, such as a user logging in or a webpage being requested. Events have timestamps and arbitrary annotations. It’s OK to have duplicate events, too. Below in Figure 3, we have an example of four event lines:

Figure 3. Event Lines Example

The second and third entries are completely identical – same day, same timestamp, same event type, and same user name. Someone just pushed a button twice to log in, and so there are two events here. Any logging system will store those separately as two different events. So, the metaphor for events is a list. It’s a list of things that are weakly connected or unconnected.

Metrics, on the other hand, are not occurrences. A metric is actually a measurement. Metrics have timestamps and annotations, and therefore they look like events, but they’re not. With metrics, you take a measurement, and if you measure the same thing at the same time, you should get one value which means you shouldn’t have duplicates. Duplicates are redundant, and so the metaphor for metrics is actually a function that maps time to value. Events, conversely, don’t need numerical values at all. The login example above doesn’t have numbers at all.
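The two metaphors can be sketched in a few lines of Python. This is only an illustration: the timestamps, user name, and metric value below are made up, and the helper names `record_event` and `record_measurement` are hypothetical. Events go into a list that keeps duplicates, while a metric behaves like a function from time to value, so a repeated measurement at the same timestamp adds nothing new.

```python
# Events: an append-only list. Identical entries are stored as separate events.
events = []


def record_event(timestamp: int, kind: str, user: str) -> None:
    events.append((timestamp, kind, user))


# Metric: a mapping from timestamp to value, i.e. a function of time.
cpu_load = {}


def record_measurement(timestamp: int, value: float) -> None:
    # Measuring the same thing at the same time yields one value,
    # so a repeat simply lands on the same key.
    cpu_load[timestamp] = value


# The same login reported twice (the user pushed the button twice).
record_event(1_600_000_000, "login", "alice")
record_event(1_600_000_000, "login", "alice")

# The same measurement reported twice.
record_measurement(1_600_000_000, 0.42)
record_measurement(1_600_000_000, 0.42)

print(len(events))    # two separate events are kept
print(len(cpu_load))  # only one point exists for that timestamp
```

Note that the event tuples carry no numeric value at all, while every metric point is a number attached to a time.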

Why is this function metaphor so powerful? Three big reasons. First, we have a whole bunch of mathematical machinery we can bring to bear on top of these functions. The second big reason is that we can vectorize this data, i.e. take all values for a given function, pull them out as a big vector, compress them together, and store them as one giant blob. In that process, you can store tens of thousands of points in one blob, making it far cheaper to store than if they were events. Also, you can retrieve that data much quicker than you would with events as well. Finally, you can also leverage visual intuition – what humans are particularly great at – at scale with functions, which we will show in our many examples.
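As a rough illustration of the vectorization idea, the sketch below packs a whole series into one compressed blob using only the Python standard library. It is not a description of how Wavefront stores data; the layout (delta-encoded timestamps followed by values, compressed with zlib) and the function names `pack_series` and `unpack_series` are assumptions made for this example.

```python
import struct
import zlib


def pack_series(points):
    """Pack a time-sorted list of (timestamp, value) pairs into one compressed blob."""
    timestamps = [t for t, _ in points]
    values = [v for _, v in points]
    # Delta-encode timestamps: regular reporting intervals become repeated small numbers.
    deltas = timestamps[:1] + [b - a for a, b in zip(timestamps, timestamps[1:])]
    raw = struct.pack(f"<{len(deltas)}q", *deltas) + struct.pack(f"<{len(values)}d", *values)
    return len(points), zlib.compress(raw)


def unpack_series(count, blob):
    """Inverse of pack_series: rebuild the list of (timestamp, value) pairs."""
    raw = zlib.decompress(blob)
    deltas = struct.unpack_from(f"<{count}q", raw, 0)
    values = struct.unpack_from(f"<{count}d", raw, 8 * count)
    timestamps = []
    current = 0
    for delta in deltas:
        current += delta
        timestamps.append(current)
    return list(zip(timestamps, values))


# Ten thousand one-minute samples of a made-up, constant metric.
points = [(1_600_000_000 + 60 * i, 50.0) for i in range(10_000)]
count, blob = pack_series(points)

raw_size = 16 * len(points)  # 8 bytes per timestamp + 8 bytes per value
print(f"raw: {raw_size} bytes, packed: {len(blob)} bytes")
```

Real telemetry is noisier than this constant series, so actual compression ratios will be lower, but the principle is the same: a function of time can be stored and fetched as one contiguous vector instead of thousands of independent records.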

A Better Way to Define Anomalies in Practice

How should you think about anomalies? Going back to the definition, an anomaly is the complement of normal. There are two ways to define an anomaly. One is to define the anomaly directly with a mathematical function. The other is to define what is normal and say that everything else is an anomaly. In practice, the second way, defining what is normal, happens to be much easier.

Figure 4. Anomaly vs. Normal Definition
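Here is a minimal sketch of the second approach in Python: describe normal, then treat everything else as anomalous. The choice of "normal" (a band of the median plus or minus `k` times the median absolute deviation), the value of `k`, and the sample history are all assumptions for illustration, not a method prescribed in this post.

```python
from statistics import median


def normal_band(history, k=3.0):
    """Define 'normal' as median +/- k * MAD (median absolute deviation) of past values."""
    center = median(history)
    mad = median(abs(x - center) for x in history)
    return center - k * mad, center + k * mad


def is_normal(value, band):
    low, high = band
    return low <= value <= high


def is_anomaly(value, band):
    # The anomaly is simply the complement of normal.
    return not is_normal(value, band)


# Made-up history of a metric, e.g. request latency in milliseconds.
history = [100, 102, 98, 101, 99, 103, 97, 100]
band = normal_band(history)

for value in (101, 140, 60):
    print(value, "anomaly" if is_anomaly(value, band) else "normal")
```

Notice that nothing in the code describes what an anomaly looks like. Spikes and dips are both caught because they fall outside the band that describes normal behavior.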

In part 2 of our four-part series on anomaly detection for cloud-native applications, I’ll jump into examples of metric-driven anomalies, all of which can easily be converted into analytics-driven smart alerts. If you want to try Wavefront in the meantime, sign up for our free trial.

The post How to Auto-Detect Cloud App Anomalies with Analytics: 10 Smart Alerting Examples – Part 1 appeared first on Wavefront by VMware.

About the Author

More Content by Mike Johnson