Historically, applications serving large volumes of user transactions were first developed as monoliths. Monolithic apps then evolved into multi-tier applications, many of them with multiple components. As these applications grew, specific problems in maintaining them became apparent.

The teams developing these applications grew as well, and they organized themselves so that each team stayed focused on particular features. Whenever they made code changes, developer groups needed to coordinate their work with other teams. As applications became ever more complex, coordinating work between teams and avoiding costly failures became equally hard. Different development teams also had different delivery timelines, which made it evident that they should develop their respective features in a logically separated fashion. Separation would give each team more control over its builds, code verification, and feature deployment. That brings us to the point where an application needs to be logically split into microservices.

Service Mesh Architectures Need Observability

The configurable network layer for microservices and their interactions is called a service mesh. By itself, a service mesh architecture comes with an inherent lack of visibility, so it needs to be coupled with monitoring and visibility platforms. This is because microservices are often built in different development environments, since specific functionality is simply easier to code with the appropriate tools. That’s more of an advantage than a limitation. Likewise, over the application’s lifespan, new tools and languages will emerge, and developers will want to take advantage of them.

Monolithic applications don’t go away quickly in most organizations. Replacing a monolith with microservices is usually an incremental process. Taking all of this into account, the service mesh landscape looks very complicated. New services are introduced, built with different tools, and they talk to each other while co-existing with the monolithic application.

So when something fails, how can developers debug the problem? The root cause can be in the network, on the servers, within containers, or in a communication miscue between services. Any hardware device can fail, and any line of code could be the problem. The protocols governing communication between services can bury the issue in an endless stream of protocol messages. Any transferred data packet may contain the field that is the key to solving the problem.

The problem can be even more costly for the organization managing a service mesh. The complexity of debugging is multiplied by the many different tools and coding environments used for service development. How can all developers get a comprehensive view of all the services when those services were developed across such heterogeneous environments?

The solution is to gather information on as many elements of the communication between services as possible. That way, it doesn’t matter how many different coding technologies were used to develop them. The rich information that can be gathered from service communication gives a broad view of the service mesh’s health.

Istio and Envoy are open source projects that help us observe and manage the service mesh. Strong enthusiasm from the developer community has established Istio and Envoy as an emerging standard in Kubernetes environments.

Envoy, originally built by Lyft, is a high-performance proxy used to watch all traffic for all services in the service mesh. It’s deployed as a sidecar to the service, in the same Kubernetes pod. Envoy’s features include dynamic service discovery, load balancing, health checks, HTTP/2 and gRPC proxying, TLS termination, and staged rollouts.

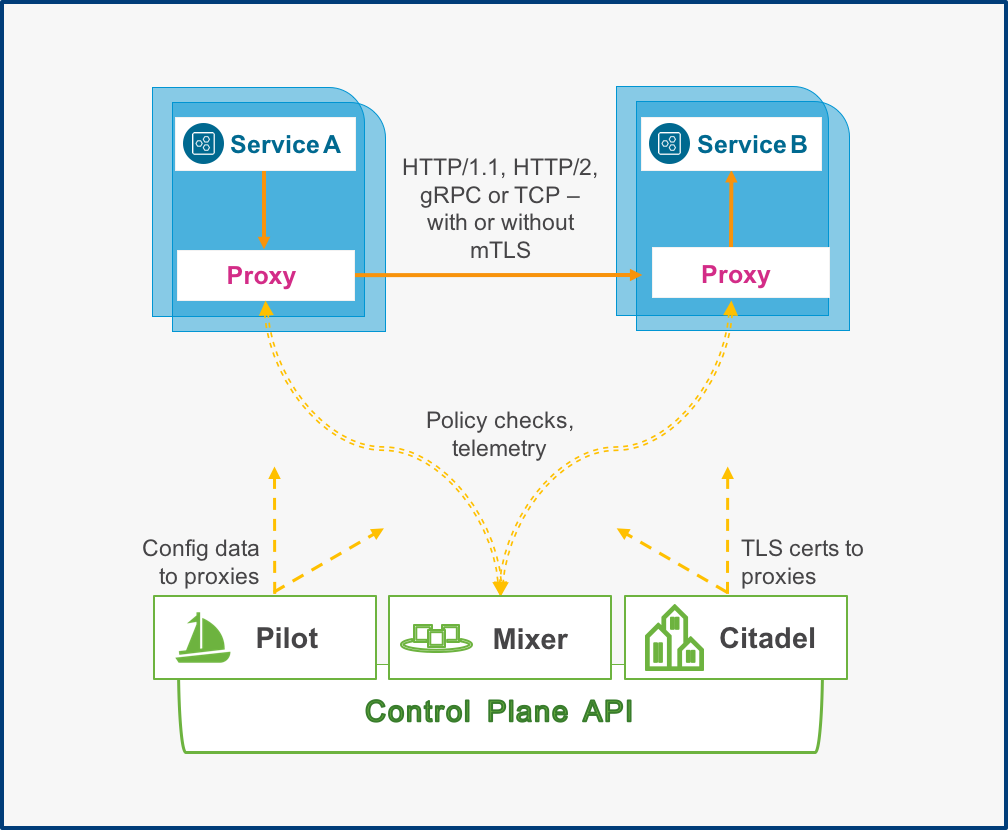

An Istio service mesh architecture is logically split into a control plane and a data plane. The data plane consists of Envoy proxies. The control plane manages and configures the proxies to handle the traffic between services. Among its other features, Istio allows control of traffic flow and API calls between services.

Definition of Istio Components

Figure 1 represents the architecture of Istio. The Mixer is an Istio component that enforces access control and usage policies across the service mesh. It also collects telemetry data from the Envoy proxies and other services. The Envoy sidecar calls the Mixer before each service request. The call contains request-level attributes, which the Mixer evaluates. After each service request, Envoy calls the Mixer again to report telemetry. Envoy sidecars use caching and telemetry buffering to minimize the frequency of calls to the Mixer.
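To make the caching and buffering idea concrete, here is a minimal Python sketch of a sidecar-like client that caches policy-check results for a short time and batches telemetry records before sending them. It is a toy model, not Envoy’s or the Mixer’s actual API: the class name `SidecarPolicyClient`, the `check_fn` and `report_fn` callbacks, the cache TTL, and the batch size are all hypothetical names and values chosen for illustration.

```python
import time
from typing import Callable, Dict, List, Tuple

# Hashable stand-in for request-level attributes (hypothetical shape).
Attributes = Tuple[Tuple[str, str], ...]


class SidecarPolicyClient:
    """Toy sidecar: caches policy checks and buffers telemetry reports."""

    def __init__(
        self,
        check_fn: Callable[[Attributes], bool],
        report_fn: Callable[[List[dict]], None],
        cache_ttl: float = 5.0,
        batch_size: int = 3,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._check_fn = check_fn      # stands in for the remote policy check
        self._report_fn = report_fn    # stands in for the remote telemetry report
        self._cache_ttl = cache_ttl
        self._batch_size = batch_size
        self._clock = clock
        self._cache: Dict[Attributes, Tuple[bool, float]] = {}
        self._buffer: List[dict] = []

    def check(self, attrs: Attributes) -> bool:
        """Return the cached decision if it is still fresh, else ask remotely."""
        now = self._clock()
        cached = self._cache.get(attrs)
        if cached is not None and cached[1] > now:
            return cached[0]
        allowed = self._check_fn(attrs)
        self._cache[attrs] = (allowed, now + self._cache_ttl)
        return allowed

    def report(self, record: dict) -> None:
        """Buffer a telemetry record; send a batch once the buffer is full."""
        self._buffer.append(record)
        if len(self._buffer) >= self._batch_size:
            self.flush()

    def flush(self) -> None:
        """Send whatever is buffered as a single batch."""
        if self._buffer:
            self._report_fn(list(self._buffer))
            self._buffer.clear()


# Usage: four identical requests trigger one remote check and two report batches.
check_calls: List[Attributes] = []
report_batches: List[List[dict]] = []


def fake_check(attrs: Attributes) -> bool:
    check_calls.append(attrs)
    return dict(attrs).get("source") != "blocked-service"


client = SidecarPolicyClient(fake_check, report_batches.append)
request_attrs = (("source", "frontend"), ("destination", "orders"))
for i in range(4):
    if client.check(request_attrs):
        client.report({"request": i, "latency_ms": 10 + i})
client.flush()
print(len(check_calls), [len(batch) for batch in report_batches])
```

The trade-off in a design like this is freshness versus load: a longer cache lifetime or a larger batch means fewer remote calls, but policy changes and telemetry take longer to propagate.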

Service Mesh Architecture

The Pilot manages all the Envoy proxy instances in an Istio service mesh. It lets you specify rules for routing traffic between Envoy sidecars, which enables A/B tests and canary deployments. The Pilot also allows configuring timeouts, retries, and circuit breakers, and it provides service discovery for the Envoy proxies.
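As an illustration of what a routing rule for a canary deployment amounts to, the Python sketch below splits traffic between two versions of a service by weight. It is not Istio configuration or Pilot code: the function `pick_version`, the version labels `v1` and `v2`, and the 90/10 split are assumptions made up for the example.

```python
import random
from collections import Counter
from typing import Dict


def pick_version(weights: Dict[str, int], rng: random.Random) -> str:
    """Choose a destination version with probability proportional to its weight."""
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


# Hypothetical canary rule: 90% of requests go to v1, 10% to the new v2.
canary_rule = {"v1": 90, "v2": 10}

rng = random.Random(42)  # seeded so the simulation is repeatable
counts = Counter(pick_version(canary_rule, rng) for _ in range(10_000))
print(dict(counts))
```

Shifting more traffic to the new version is then just a matter of changing the weights, which is why a canary rollout can proceed without redeploying either version.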

The Citadel handles keys and certificates. It provides secure service-to-service and end-user authentication. By using the Citadel, users can implement policies based on service identity rather than on network-level rules.

Must-have for Observability: Metrics, Histograms, and Traces

Istio and Envoy are building blocks that must be combined with visibility platforms that collect metrics, histograms, and traces. This combination gives us a comprehensive look at the service mesh and all its components, which makes it genuinely manageable. Data coming from Envoy proxies can then be stored, analyzed across dashboards in real time, and searched for specific behavioral patterns. By analyzing metrics, histograms, and traces, engineers can dive deep into any particular area that may be causing latencies or errors.
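As a rough illustration of what such a platform derives from proxy data, the Python sketch below turns a handful of request records into a request rate, an error ratio, and a latency histogram. The record layout, the bucket boundaries, and the `summarize` function are assumptions made for this example; real proxy telemetry and real observability platforms use their own formats.

```python
from bisect import bisect_left
from typing import List, Sequence, Tuple

# Hypothetical record: (timestamp in seconds, HTTP status, latency in ms).
Request = Tuple[float, int, float]


def summarize(
    requests: Sequence[Request],
    bucket_bounds_ms: Sequence[float],
    window_s: float,
) -> Tuple[float, float, List[int]]:
    """Return (requests per second, error ratio, latency histogram counts).

    bucket_bounds_ms must be sorted. Bucket i counts latencies that are
    <= bucket_bounds_ms[i] and above the previous bound; the final extra
    bucket counts everything above the last bound.
    """
    rate = len(requests) / window_s
    errors = sum(1 for _, status, _ in requests if status >= 500)
    error_ratio = errors / len(requests) if requests else 0.0
    counts = [0] * (len(bucket_bounds_ms) + 1)
    for _, _, latency_ms in requests:
        counts[bisect_left(bucket_bounds_ms, latency_ms)] += 1
    return rate, error_ratio, counts


# Made-up sample data covering a five-second window.
sample = [
    (0.0, 200, 8.0),
    (1.0, 200, 12.0),
    (2.0, 503, 250.0),
    (3.0, 200, 40.0),
    (4.0, 200, 95.0),
]
rate, error_ratio, histogram = summarize(sample, [10.0, 50.0, 100.0], window_s=5.0)
print(rate, error_ratio, histogram)  # 1.0 0.2 [1, 2, 1, 1]
```

A single average latency would hide the one slow, failing request in this sample. The histogram keeps it visible in the overflow bucket, which is the reason distributions matter alongside plain metrics.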

All of this data is truly comprehensive, and it’s essential for debugging during development, deployment, and production. Moreover, it solves the original problem of the move from monolith to service mesh: observability is paramount, and without it there is no successful migration.

Wavefront’s Istio Integration Simplifies Observability

The Wavefront platform was built to provide the 3D Observability that’s essential for microservices operations. It ingests, analyzes, and visualizes metrics, histograms, and traces for modern application environments at ultra-high scale. We’ve recently published the Wavefront Istio integration. Learn more about our Istio integration here. Using this integration, SREs and developers can get visibility into the key performance metrics from their Istio environment, including RED metrics and histogram metric distributions. When you combine the Istio integration with other elements such as Envoy metrics, Kubernetes metrics, and the overall cloud infrastructure visibility provided by more than 60 Wavefront cloud integrations, you dramatically improve observability.

In an upcoming follow-on blog, Chhavi Nijhawan will cover Wavefront’s Distributed Tracing capabilities as they relate to Istio and Envoy.

In the meantime, if you want to try the Wavefront Istio integration, please sign up for the Wavefront self-service trial.

The post Here’s Why You Need Service Mesh Observability appeared first on Wavefront by VMware.

About the Author

Stela Udovicic